Be careful, it’s Vegas out there

The cavernous room is lit in a dim, yet pleasing way. There are no windows, no clocks, no reminders of the outside world. It could be noon or it could be midnight outside. In here, there’s always time for one more round.

In front of you, a machine flashes like a video game. You watch the simulated wheels turn quickly at first and then they slow, one by one. The first wheel lands on the letter “W.” The next stops at “I.” The last rolls excruciatingly forward, passing worthless letter after worthless letter until you see the one you’ve been waiting for.

The letter “N” appears at the top of the screen and begins to move its way down toward the jackpot position. You watch as it slides ever so slowly toward the thing you’ve been playing for all night: WIN. You see that word completed on the screen for just an instant before the N ticks once more, out of position and back to worthlessness. You almost won big. Or so you think. In reality, you’ve just been manipulated.

I recall reading, many years ago, about the incredible amount of planning that goes into making slot machines as addictive as possible. Their lights and bells are meticulously calibrated to keep you in a perpetual state of agitated excitement. The payouts are statistically measured and timed to give you just enough reward so that you keep on playing. And every once in a while, just often enough according to the latest behavioral science, the machine will let you think you almost won the big jackpot. How can you stop playing now when you were oh so close to cashing in?

It’s all carefully choreographed to relieve you of as much of your money as possible. Now those same tricks and tactics are coming to a website near you.

It’s funny, in an ironic kind of way. The internet has put all the world’s information at our fingertips. In exchange, we give the internet little bits of information about ourselves. At first that exchange was unambiguously to our advantage. But those little bits of data we hand over with every click and transaction are accumulating into frighteningly accurate portraits of our personal lives. Moreover, this deep knowledge about our habits and behaviors is giving surprising power to people we’ll never meet.

The New York Times ran a fascinating article about how the large retail chain Target used its database to predict which of its customers were pregnant.

Target “was able to identify about 25 products that, when analyzed together, allowed them to assign each shopper a ‘pregnancy prediction’ score. More important, they could also estimate her due date to within a small window, so Target could send coupons timed to very specific stages of her pregnancy.”

Of course, not all pregnancies are perceived as bundles of joy. Some are even kept secret, at least from those closest to us. Just not from faceless retailers. In one case, Target’s constant barrage of baby-related promotions to a teenage girl sent her father into a rage.

“My daughter got this in the mail!” he said to a Target store manager, according to The Times. “She’s still in high school, and you’re sending her coupons for baby clothes and cribs? Are you trying to encourage her to get pregnant?”

Oops. Target apparently thought that train had already left the station. And it probably had.

That incident happened more than two years ago. It’s safe to assume that the sophistication of Target’s data collection and analysis has grown at least as fast as technological progress more generally, which is to say extraordinarily fast.

In the same way that slot machines have evolved over the years from simple mechanical devices into manipulative supercomputers, modern marketing has moved beyond mass mailings and even targeted promotions to something that seems a bit more exploitive.

If you’re like me, you’ve probably grown accustomed to seeing ads pop up for things you’ve recently searched for online. When I looked at some camera equipment on Amazon a couple of weeks back, I wasn’t terribly surprised to find other, unrelated websites promoting the same gear.

But that tactic is downright passive compared to what’s been happening more recently. Instead of just deluging me with ads for products I’ve researched online, I’m now getting offered discounts on those same products, but with an important catch. The discounts are only valid if I spend more than I originally planned.

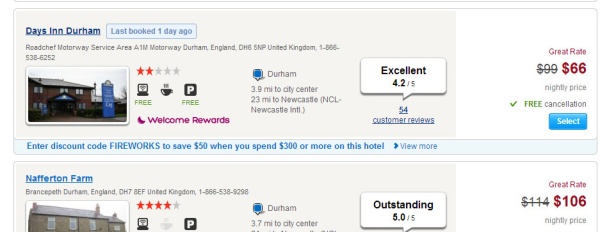

Hotels.com discount codes are carefully calibrated to get you to spend more money

Hotels.com has done this to me in both general and very specific ways. They know quite a bit about my travel habits: how often I book hotels, how long I normally stay, and how much I typically spend. So do you think it was a coincidence that they offered me a discount coupon valid for bookings that cost just a bit more than my ordinary hotel budget?

It could have been, I guess, except that they did the same thing to me again, only in a slightly different way. This time I had just finished searching for a hotel for four nights in Durham, England. Along with the listing of available rooms, Hotels.com offered up a promotion code for $50 off a stay at the Days Inn.

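It seemed like a good deal until I clicked through to discover that the code required a purchase of $300 or more. Meanwhile, my booking for that hotel would total just $264. I wouldn’t be able to use the discount unless I stayed an extra night. But if I extended my stay, I’d get that extra night nearly free: assuming the same rate of about $66 a night, five nights would come to roughly $330, or $280 after the discount, only $16 more than my original booking.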

I was so, so close to a really good deal. How could I stop now?

The similarities to the way slot machines manipulate people floored me.

In both cases Hotels.com was trying to induce me to spend more than I typically would. They figure, probably correctly, that if they can get me to spend a bit more than I’m used to, I’ll enjoy that higher spending level and make it my normal budget. And you can bet that once I’ve raised my spending threshold, I’ll receive even more promotions making those just-out-of-reach purchases seem like can’t-miss deals.

For this ploy to work, though, Hotels.com – or any company – needs to know an awful lot about my particular spending habits. Thanks to “Big Data” they know that, and a whole lot more.

Unfortunately it gets worse. Last week news broke that Facebook had successfully altered people’s moods by tampering with their news feeds.

“For one week in January 2012, data scientists skewed what almost 700,000 Facebook users saw when they logged into its service. Some people were shown content with a preponderance of happy and positive words; some were shown content analyzed as sadder than average. And when the week was over, these manipulated users were more likely to post either especially positive or negative words themselves.”

Why, you might ask, is Facebook trying to make us sad? One possible explanation is the huge body of evidence that emotions play a critical role in how we make our decisions, and especially how we make our buying decisions. How we “feel” about brands, about ourselves, whether we’re currently happy or sad: all of it influences what we buy and how much we buy.

If Facebook can selectively shift how we feel, they can sell that capability to marketers. Instead of just pitching us stuff targeted to our specific interests, and enticing us with cleverly calibrated coupons, now they may be able to tailor these techniques to exploit the very moods they helped create.

On the specific issue, yet more reinforcement for my decision never to use Facebook. On the general issue, it’s starting to seem that we need multiple online personas. The Internet is turning into a monster.

This is indeed quite scary. I am terrible for not protecting my data against being shared, and I’m quite sure I would have fallen for Hotels.com’s ploy. Not a nice feeling to realise that.

Not a nice feeling at all. I’ve seen several stories similar to this one detailing how good “big data” is becoming at figuring out who we are. While most (all?) of those stories were written in an alarmist tone, none ever really laid out what we should be alarmed about. Now we’re beginning to see the first evidence that companies are starting to figure out how to use this information to “hack” or manipulate us, and that is something to be alarmed about.

Pretty clever stuff but equally disturbing. Am I that transparent?! Big brother just got bigger

I live out in the boonies, so the nearest store other than a WalMart (which I refuse to use) is at least an hour’s drive. To get to a real mall or other stores is closer to three hours. So, I essentially end up using Amazon quite a bit. I got truly annoyed at the emails flooding in about anything I had looked at and did the unsubscribe. Then I learned that they send the emails out by categories: featuring books, or electronics, etc. So then I had to unsubscribe from each one. I’m seriously trying to figure out a way to avoid Amazon altogether, but haven’t come up with a solution just yet. I’m already down to buying much less, not a bad idea, but still…

I don’t know that there is a solution. This is progress and we can no more roll it back than we can hold back the tides. But I think we’re approaching a point where maybe some limits need to be considered.

I can’t help but wonder what will happen next when they realize most folks tune them out.

Hi Brian & Shannon,

Great observation. I have an issue with the amount of access to my phone that is demanded in return for downloading most apps. Even a lot of fairly basic apps want access to my contacts list, camera, microphone, GPS, etc. With no option to opt out! Hence I don’t download the app. Which makes my smart phone a bit less functional than it might be. Frustrating.

Really enjoying following your European travels.

Cheers,

Jason & Rose

Hey guys. Hope you’re doing well.

Yeah, all the things these apps are potentially doing behind our backs is a whole other story.

Leaving comments provides data for someone’s big data. Disqus won’t let me comment on blogs that use the service because I won’t agree to letting it “examine” my email accounts. It’s very intrusive.

I’m using Firefox with the ad blocker add-on. I don’t see too much advertising on my laptop. The tablet, however, is just covered in it – to the point where I don’t really see it anymore.

Great post. I am a psychologist and I guess I contribute to this “manipulation” with my work. I think there’s something to be said for using behavior science to craft experiences that people find engaging and enjoyable, and yeah, even to sell products. But as our abilities in consumer manipulation increase, it’s also increasingly important to define and follow an ethical code for how we collect and use consumer data. I liked your thoughts on the subject!

Hi Amy,

Thanks for your comments. You mention the importance of defining and following an ethical code in these matters. Does one exist? I’m pretty sure there isn’t anything mandated by any regulatory body, but is there some form of broadly accepted best practices employed by firms? I’m thinking it’s more like the Wild West at the moment, but you’d know better than me.

I consider basic research ethics to apply to my applied work, too. As an individual worker, I do my best to abide by my training. I work for a big company that also has a lot of its own oversight on how we use and store consumer data. One of the big issues to resolve, IMO, is how to make sure individual users own their data.

Well Facebook to me is nothing but a storage of my photos for my friends and relatives to see.. of my latest travel destinations and to let them know that I’m still alive. :>

But this internet behavior is indeed getting scarier.. but I don’t normally fall under these promos’ spells. I’m not a huge coupon fan.. but annoying still.
